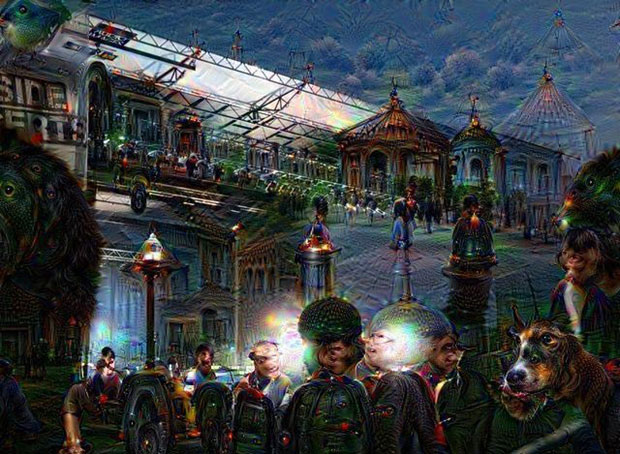

Buffalo as Seen Through Google Deep Dream

Last week, Google released an open-source approximation of the code that allows its artificial neural network to classify images. By analyzing millions of images, the network develops its own idea of what common objects, like bananas and dumbbells, look like, and over time it gains the ability to interpret an image without human input, no matter the angle, the lighting, or anything else that obscures the subject.

That’s the idea, anyway: the code that Google released is designed to test the network’s capabilities by asking it to exaggerate the features it recognizes and alter images accordingly. The result: slugs, dogs, and a healthy dose of uncanny nausea. We decided to “Deep Dreamify” a selection of Buffalo icons and landmarks so that Google could spectacularize the ghoulish, phantom reality that exists right next to our own. Here are the results. To submit your own Deep Dream photos, post your photo to the social media platform of your choice with the hashtag #ThePublicDeepDream. We’ll be watching.

Albright Knox photo by Kristy Rock.

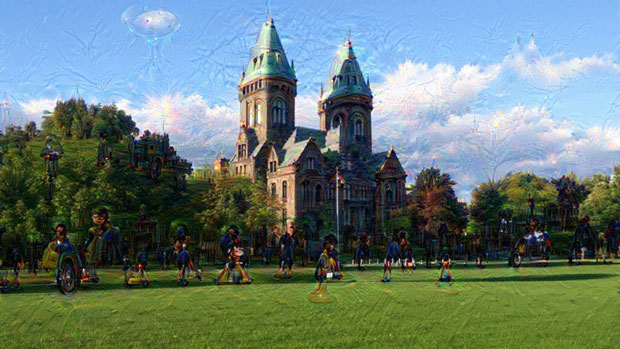

The Richardson Olmsted Complex photo by Wes Buehler.

Central Terminal photo by Alex Stopper.

Canalside photo by Shauna Presto.

Niagara Snowman, The Public cover. Photo by Amanda Ferreira.

Best Summer Ever, The Public cover. Photo by Billy Sandora-Nastyn.

Untitled (Cindy, from MTC Series) by Robert Longo, The Public cover.

223 Allen St (The Old Pink) photo by Kristy Rock.

Shark Girl photo by Shauna Presto.

Silo City photo by Kristy Rock.

Widespread Panic at Artpark photo by Charles Dowd.

To Deep Dreamify your own photos, use one of these three websites.